QUBIT AI | quantum & synthetic ai

Electronic Language International Festival

July 3rd to August 25th

Tuesday to Sunday, 10am – 8pm

FIESP Cultural Center

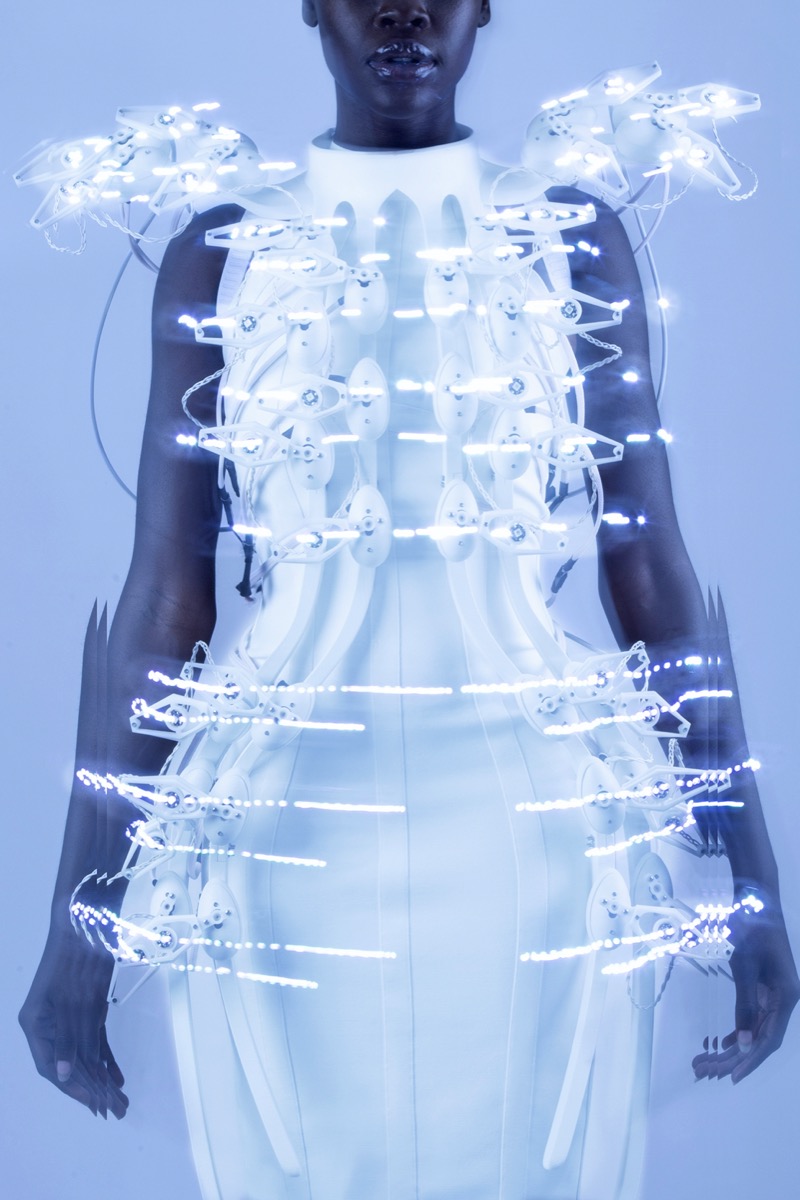

Design: André Lenz

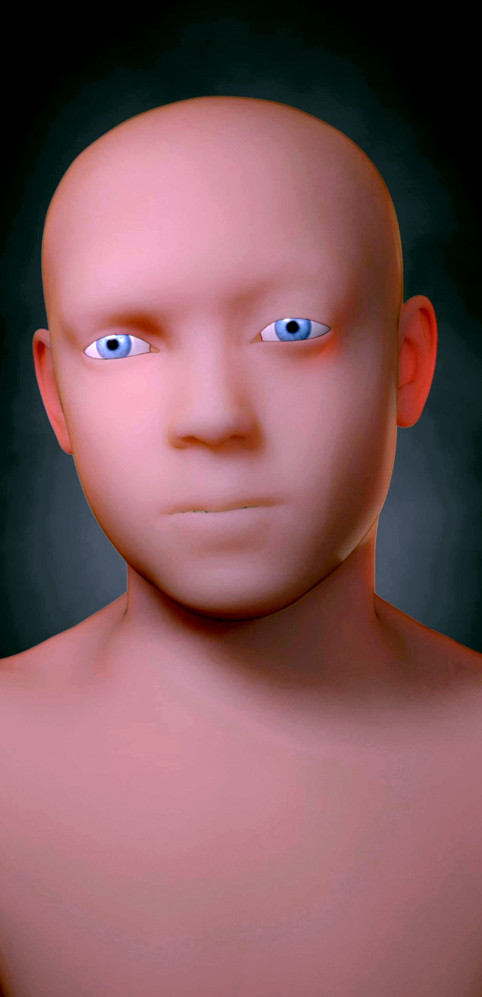

Image: Iskarioto Dystopian AI Films – Athena

QUBIT AI

The Electronic Language International Festival (FILE) is an internationally renowned Brazilian project that has explored the intersection between art and technology since 2000. In its 25 years of existence, the festival has stood out for fostering exhibition spaces and debate about artistic innovations driven by disruptive technologies, inviting the public to engage with experimental forms of art that challenge the boundaries of convention. Currently, two of these technologies stand out: the accelerated development of quantum computing, and artificial intelligence supported by synthetic data.

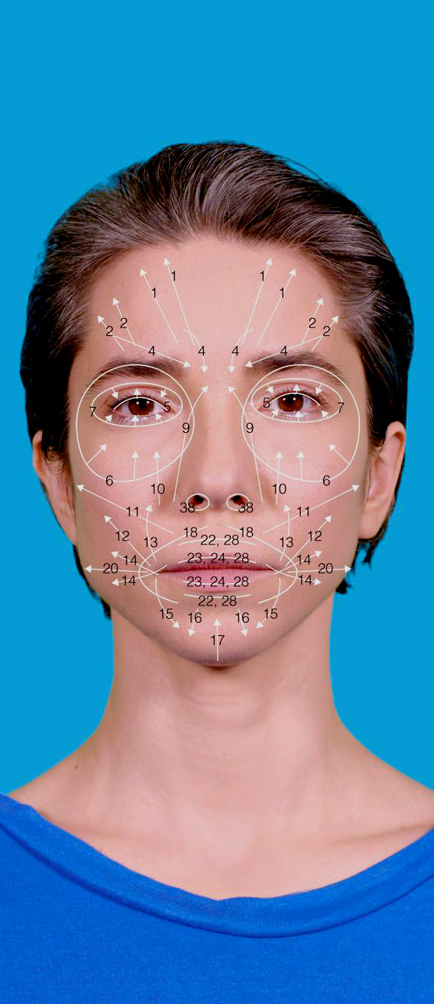

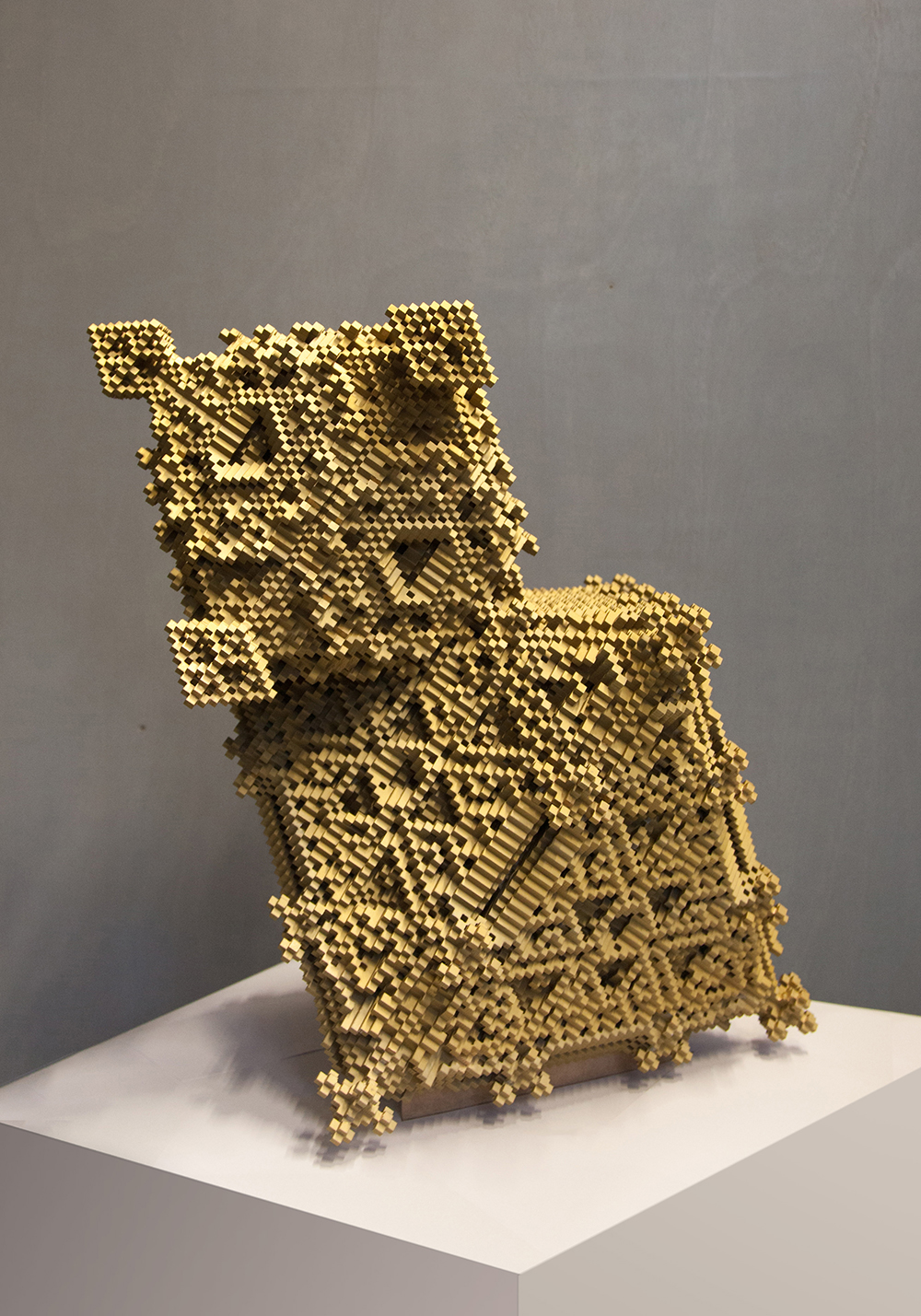

Quantum computing, an emerging revolution in the technological field, offers contemporary artists a new range of creative possibilities. It opens unprecedented frontiers through a computational model based mainly on quantum superposition and entanglement, a new field of exploration for synthetic computer science as well as for the arts in general. Artificial intelligence, in turn, fueled by synthetic data, offers artists a new way of making and understanding art, opening up space for new forms, concepts and artistic expressions.

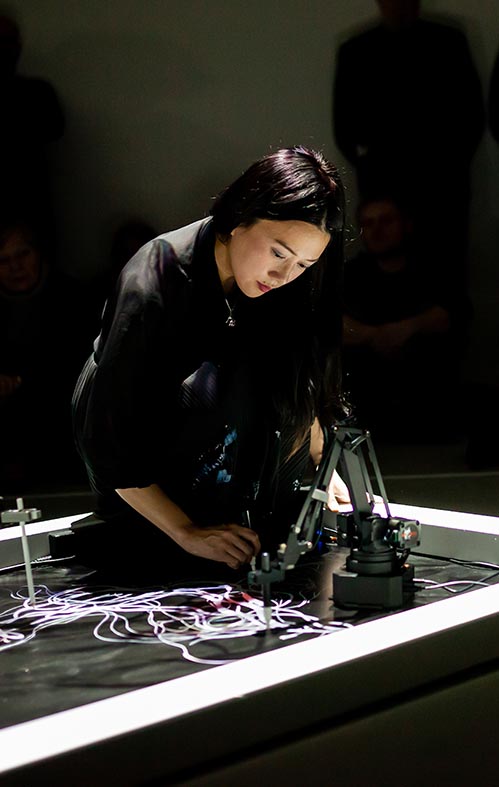

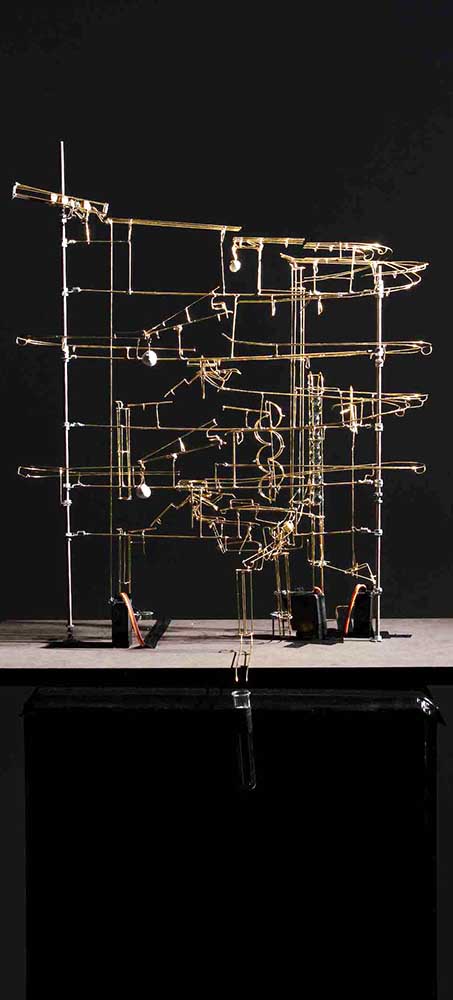

Entitled QUBIT AI, the exhibition delves into this unexplored territory, presenting a selection of works that result from the connection between artistic creation and technology and proposing a theoretical reflection on what the relationship between quantum computers and synthetic artificial intelligence will be.

Visitors will be invited to experience immersive installations, experimental videos, digital sculptures and other forms of interactive art that intertwine reality and imagination. The exhibition encourages reflection on the influence of technology on art and contemporary society, while providing an environment to compare already established technological arts (analog and digital) with the possible futures of art in the synthetic era, enhanced by quantum computing. The QUBIT AI exhibition at FILE SP 2024 transcends the mere presentation of works of art; it is a journey to the limits of human creativity, driven by the convergence of art, science and technology.

Ricardo Barreto and Paula Perissinotto

co-organizers and curators of FILE

Electronic Language International Festival