Memo Akten and Katie Peyton Hofstadter

Embodied Simulation

‘Embodied Simulation’ is a multiscreen video and sound installation that aims to provoke and nurture strong connections to the global ecosystems of which we are a part. The work combines artificial intelligence with dance and research from neuroscience to create an immersive, embodied experience, extending the viewer’s bodily perception beyond the skin, and into the environment.

The cognitive phenomenon of embodied simulation (an evolved and refined version of ‘mirror neurons’ theory) refers to the way we feel and embody the movements of others as if they were happening in our own bodies. The brain of an observer unconsciously mirrors the movements of others, all the way through to planning and simulating the execution of those movements in the observer’s own body. This can even be seen in situations such as embodying and ‘feeling’ the movement of fabric blowing in the wind. As Vittorio Gallese writes, “By means of a shared neural state realized in two different bodies that nevertheless obey to the same functional rules, the ‘objectual other’ becomes ‘another self’.”

QUBIT AI: Valentin Rye

Space Odyssey 2002

FILE 2024 | Aesthetic Synthetics

International Electronic Language Festival

Valentin Rye – A Space Odyssey 2002 – Denmark

This video offers a surrealist take on futuristic sci-fi concepts from the 60s and 70s. Imagine a future colonization of Mars where interior design is given an avant-garde twist. It was created by brainstorming visual ideas, generating countless images, refining the best ones and assembling them into clips. After careful selection, these clips have been organized into a cohesive timeline, accompanied by atmospheric music to enhance the overall experience.

Bio

Valentin Rye is a self-taught machine whisperer based in Copenhagen, deeply passionate about art, composition and the possibilities of technology. He has been involved in AI and neural network image manipulation since he started working with DeepDream in 2015. While his IT work lacks creativity, he indulges in digital arts during his free time, exploring graphics, web design, video, animation and experimentation with images.

QUBIT AI: Luigi Novellino (aka PintoCreation)

Brain Entity

FILE 2024 | Interator – Sound Synthetics

Luigi Novellino (aka PintoCreation) – Brain Entity – Italy

In a laboratory, a brain-like organism grows rapidly, integrating with technology and evolving into a giant entity that manipulates objects with its mind. It hypnotizes the city, absorbing energy and creating patterns. Efforts to stop it fail, signaling a battle to understand existence. This symbolizes a new era of coexistence, urging us to embrace interconnectivity and navigate a more integrated world.

Bio

Fascinated by the limitless domain of AI, Luigi Novellino adopts the title syntographer, a term that resonates deeply within the community. The artist often asks himself: “am I an artist?” Art, in his view, defies rigid definitions or limits; it represents a fluid expression of creativity that transcends labels. The artist’s ultimate goal is to awaken something in the viewer, provoking thoughts and evoking emotions.

Credits

Music: Odyssey by John Tasoulas

Dragan Ilic

(Re)Evolution

With the machine programmed to draw, the robot becomes a medium for interaction and for “symbiosis” with the artist, creating a kind of “hybrid body” of man and machine, whose nervous system and brain waves administer “software commands” to the robot during the drawing performance. A key actor in the exhibition will be the new model of the KUKA KR 210 robot, which has a multifunctional performative role: drawing, experimental dance, and music made through the production of industrial sound, accompanied by a six-channel video projection that documents Ilić’s projects.

Amigo & Amigo

Affinity

Affinity is an immersive interactive light and sound installation inspired by the human brain. Each light globe represents a memory; as people approach Affinity, different memories can be heard. When people touch a memory, a light is triggered, and the longer they touch, the further their light travels through the sculpture. Affinity features 62 different colour combinations and 112 points of interaction.

FUSE

FRAGILE

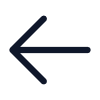

Fragile is an audio-visual installation that aims to investigate the relationship between stressful human experience and the transformations that occur in our brain. Recent scientific research has shown that neurons belonging to different areas of our brain are affected by stress. In particular, stress causes changes in neuronal circuitry, impacting its plasticity, that is, its ability to change through growth and reorganization.

Our process draws on the scientific data provided by the Society for Neuroscience and elaborates on this information to show the effect of external interactions on our nervous system and, ultimately, on our relationship with the outside world. To achieve this, we developed an artwork composed of different digital representations following one another, branching into 5 screen projections.

PAUL FRIEDLANDER

بول فريدلاندر

ポール·フリードランダー

The Brain Unravelled

“The installation is a development of the long standing wave series. It is the first light sculpture to be lit entirely by LEDs. Many thanks to my electronics engineer, Louis Norwood, for helping realise some ideas I have been contemplating for years and finally the technology has matured so I now have a completely new computer controllable light source.” Paul Friedlander

fabrica

recognition

Recognition, winner of IK Prize 2016 for digital innovation, is an artificial intelligence program that compares up-to-the-minute photojournalism with British art from the Tate collection. Over three months from 2 September to 27 November, Recognition will create an ever-expanding virtual gallery: a time capsule of the world represented in diverse types of images, past and present. A display at Tate Britain accompanies the online project, offering visitors the chance to interrupt the machine’s selection process. The results of this experiment – to see if an artificial intelligence can learn from the many personal responses humans have when looking at images – will be presented on this site at the end of the project. Recognition is a project by Fabrica for Tate, in partnership with Microsoft, with content provider Reuters and an artificial intelligence algorithm by Jolibrain.

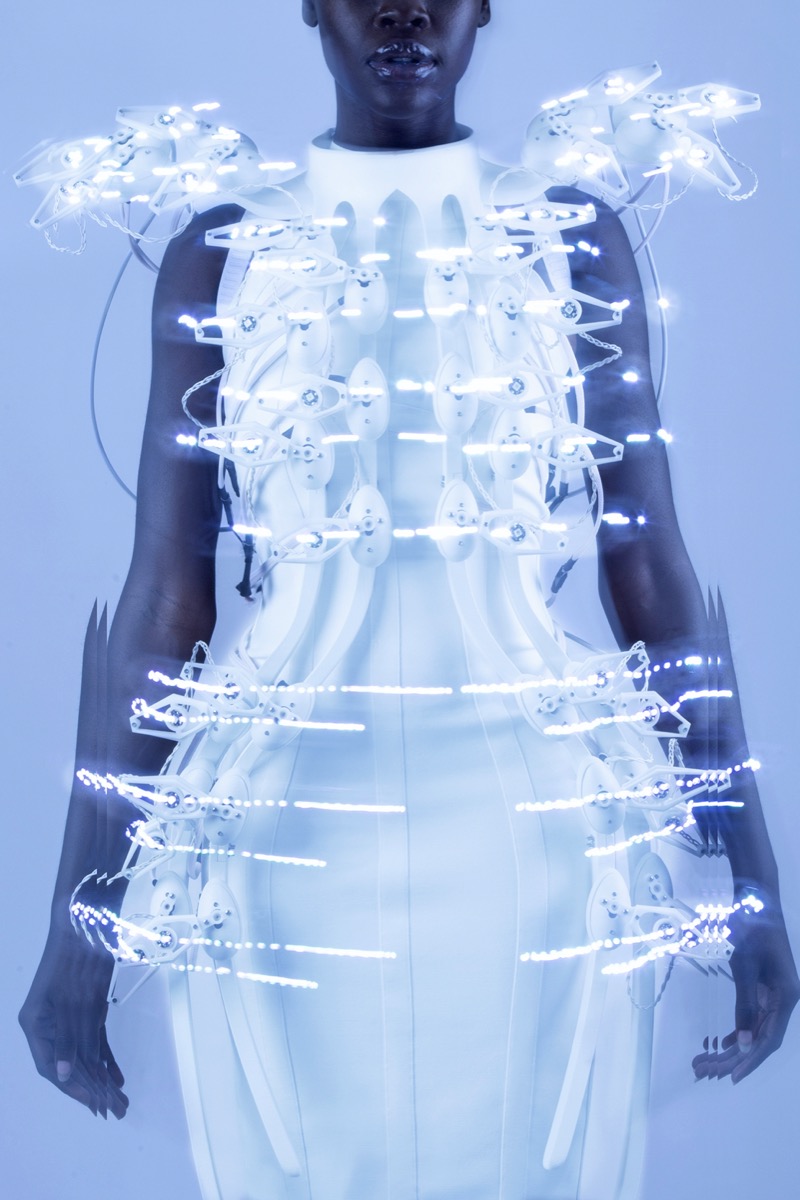

ANOUK WIPPRECHT

PANGOLIN DRESS

The Pangolin Scales Project demonstrates a 1,024-channel BCI (Brain-Computer Interface) that is able to extract information from the human brain with unprecedented resolution. The extracted information is used to interactively control the 64 outputs of the Pangolin Scale Dress. The dress is inspired by pangolins, cute, harmless animals sometimes known as scaly anteaters. They have large, protective keratin scales covering their skin (they are the only known mammals with this feature) and live in hollow trees or burrows. Pangolins are considered an endangered species, and some have theorized that the recent coronavirus may have emerged from the consumption of pangolin meat. Wipprecht’s main challenge in the project’s development was to avoid overloading the dress with additional weight. She teamed up with 3D printing experts Shapeways and Igor Knezevic to create an ‘exo-skeleton’-like dress frame (3mm) that was light enough to be worn but sturdy enough to hold all the mechanics in place.

ANOUK WIPPRECHT

Pangolin Dress

The Pangolin Scales Project demonstrates a 1,024-channel BCI (Brain-Computer Interface) that can extract information from the human brain with unprecedented resolution. The extracted information is used to interactively control the 64 outputs of the Pangolin Scale Dress. The dress is also inspired by pangolins, cute, harmless animals sometimes known as scaly anteaters. They have large, protective keratin scales on their skin (they are the only known mammals with this feature) and live in hollow trees or burrows. Pangolins are considered an endangered species, and some have suggested that the recent coronavirus may have emerged from the consumption of pangolin meat. Wipprecht’s greatest challenge in developing the project was to avoid overloading the dress with additional weight. She teamed up with 3D printing experts Shapeways and Igor Knezevic to create an ‘exo-skeleton’-like dress frame (3 mm) that was light enough to be worn but sturdy enough to hold all the mechanics in place.

FABRICA

Recognition

Recognition, winner of the IK Prize 2016 for digital innovation, is an artificial intelligence program that compares current photojournalism with British art from the Tate collection. Over three months, from 2 September to 27 November, Recognition will create a constantly growing virtual gallery: a time capsule of the world represented in different kinds of images from past and present. A display at Tate Britain accompanies the online project and offers visitors the chance to interrupt the machine’s selection process. The results of this experiment – to see whether an artificial intelligence can learn from the many personal responses people have when looking at images – will be presented on this website at the end of the project. Recognition is a project by Fabrica for Tate, in partnership with Microsoft, with content provider Reuters and an artificial intelligence algorithm by Jolibrain.

Nohlab

Journey

JOURNEY is a 4 min. immersive audiovisual experience telling the story of photons, the primary elements of light, from the moment they approach the eye until the brain reconstructs them into perceivable forms. Our journey begins with the formation of photons in blank space. The colored photons approach the eye, and we find ourselves in the capillary structure of the Iris, the first layer of the eye. The next stop for the light particles is the Lens, which has a more crystalline form, and we find ourselves in a refractive and fractalized environment. With an accelerating pace, we move towards a structure of many capillaries, aka optic nerves, gradually becoming thinner and eventually transmitting light particles towards neurons.

Antoni Rayzhekov and Katharina Köller

Somaphony

<somaphony> is composed of autogenous electronic objects that respond to stimuli and of wearable biofeedback controllers. Because it is connected to the performers’ heart pulse, muscle tension, and movement, real-time audiovisual composition is possible. Through this project, the artists explore the interdependence between digital equipment and performers, expressed as a behavioral and cybernetic (artificial brain) relationship.

Studio A N F

Computer Visions 2

After decades of trying to construct an apparatus that can think, we may be finally witnessing the fruits of those efforts: machines that know. That is to say, not only machines that can measure and look up information, but ones that seem to have a qualitative understanding of the world. A neural network trained on faces does not only know what a human face looks like, it has a sense of what a face is. Although the algorithms that produce such para-neuronal formations are relatively simple, we do not fully understand how they work. A variety of research labs have also been successfully training such nets on functional magnetic resonance imaging (fMRI) scans of living brains, enabling them to effectively extract images, concepts, thoughts from a person’s mind. This is where the inflection likely happens, as a double one: a technology whose workings are not well understood, qualitatively analyzing an equally unclear natural formation with a degree of success. Andreas N. Fischer’s work Computer Visions II seems to be waiting just beyond this cusp, where two kinds of knowing beings meet in a psychotherapeutic session of sorts[…]

Karen Lancel and Hermen Maat

Kissing Data Symphony

Intimacy Data Symphony is a poetic ritual for the intimate experience of Kissing and Caressing each other’s faces, multi-sensory and socially shared in a public space of merging realities. In live experiments with Multi-Brain BCI E.E.G. head-sets, visitors are invited as Kissers (or Caressers) and Spectators. The brain activity of people kissing and caressing is measured and visualized as streaming E.E.G. data, circling around them in real time in a floor projection. Simultaneously, the Spectators’ brain waves are measured, their neurons mirroring the activity of intimate kissing and caressing movements, resonating in their imagination. The Spectators’ brain activity data are interwoven in the data-visualization. The brain activity of all participants, mirroring each other’s emotional expressions and movements in interpersonal and aesthetic ways, co-creates an immersive visual, Reflexive Datascape.

Leontios Hadjileontiadis

Brainswarm

Brainswarm is a piece of biomusic that combines, in real time, information from the music director (both his brain signals and his movement) with the music from the instruments. This is the point where music meets biomedical sciences.

UVA UNITED VISUAL ARTISTS

Harmonics

Harmonics challenges our perception of light and sound unfolding at great speed, an illusion of time blending. As the two kinetic sculptures speed up, rotating beams of light blend to form volumes of colour, while multiple discrete sounds become a major chord. Unable to process extremely fast information, our brain reads sequential sensory inputs as a single event in time. A disconnected reality perceived as a continuum, a harmonious whole.

Lisa Park

Eunoia II

It is an interactive performance and installation that attempts to translate invisible human emotion and physiological changes into auditory representations. The work uses a commercial brainwave sensor to visualize and musicalize biological signals as art. The brain data detected in real time was used as a means of self-monitoring and control. The installation comprises 48 speakers and aluminum dishes, each containing a pool of water. The layout of “Eunoia (Vr.2)” was inspired by an Asian Buddhist symbol meaning ‘balance.’ The motif of the number 48 comes from Spinoza’s ‘Ethics’ (Chapter III), which classifies 48 human emotions into three categories – desire, pleasure, and pain. In this performance, water becomes a mirror of the artist’s internal state. It aims to physically manifest the artist’s current states as ripples in pools of water.

BR41N.IO

Mindscapes

The BR41N.IO Hackathon brings together engineers, programmers, physicians, designers, artists or fashionistas, to collaborate intensively as an interdisciplinary team. They plan and produce their own fully functional EEG-based Brain-Computer Interface headpiece to control a drone, a Sphero or e-puck robot or an orthosis with motor imagery. Whenever they think of a right arm movement, their device performs a defined action. The artists among the hackers make artful paintings or post and tweet a status update. And hackers who are enthusiasts in tailoring or 3D printing give their BCI headpiece an artful and unique design. And finally, kids create their very own ideas of an interactive head accessory that is inspired by animals, mythical creatures or their fantasy.

Dragan Ilic

A3 K3

A3 K3 is a unique interactive experience. Artworks are created by machine technology and audience participation. Dragan Ilić uses g.tec’s brain-computer interface (BCI) system where he controls a hi-tech robot with his brain. The artist and the audience draw and paint on a vertical and a horizontal canvas with the assistance of the robot. The robotic arm is fitted with DI drawing devices that clamp, hold and manipulate various artistic media. They can then create attractive, large-format artworks. Ilić thus provides a context in which people will be able to enhance and augment their abilities in making art.

Void

Abysmal

Abysmal means bottomless; resembling an abyss in depth; unfathomable. Perception is a procedure of acquiring, interpreting, selecting, and organizing sensory information. Perception presumes sensing. In people, perception is aided by sensory organs. In the area of AI, the perception mechanism puts the data acquired by the sensors together in a meaningful manner. Machine perception is the capability of a computer system to interpret data in a manner that is similar to the way humans use their senses to relate to the world around them. Inspired by the brain, deep neural networks (DNN) are thought to learn abstract representations through their hierarchical architecture. The work mostly shows the ‘hidden’ transformations happening in a network: summing and multiplying things, adding some non-linearities, creating common basic structures and patterns inside data. It creates highly non-linear functions that map ‘un-knowledge’ to ‘knowledge’.
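The ‘hidden’ transformations described above (summing, multiplying, adding non-linearities) can be illustrated with a minimal sketch. This is not the code behind Abysmal; the function names, the toy weights, and the choice of tanh as the non-linearity are all assumptions made for illustration.

```python
import math

def dense_layer(inputs, weights, biases):
    """Multiply inputs by weights, sum them per unit, then apply a non-linearity."""
    outputs = []
    for unit_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(unit_weights, inputs)) + bias
        outputs.append(math.tanh(total))  # tanh is an assumed choice of non-linearity
    return outputs

def forward(inputs, layers):
    """Return the activations of every layer, starting with the raw input."""
    activations = [inputs]
    for weights, biases in layers:
        activations.append(dense_layer(activations[-1], weights, biases))
    return activations

# Hypothetical toy network: 3 inputs -> 2 hidden units -> 1 output.
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),
    ([[1.0, -1.0]], [0.0]),
]
hidden_states = forward([1.0, 0.0, -1.0], layers)
```

Here `hidden_states[1]` holds the intermediate values that a viewer never normally sees, which is the kind of internal state a visualization of a network could draw on.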

TUNDRA

My Whale

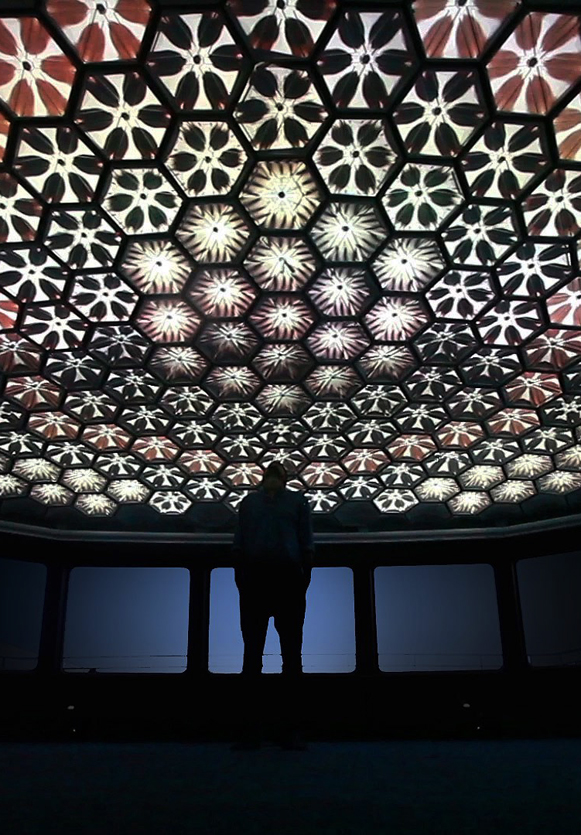

There is an impressive space at the front of the ship, with a panoramic windshield and a hexagonal pattern on the vaulted ceiling, left over from the 1980s, when the “Brusov” was constructed in Austria. Standing there gives you the feeling of floating through the reflections of the Krymsky bridge lights on the river, inside a giant whale’s head, looking through its eyes and listening to its songs, which flow across a brain made of hexagonal cells through the wires hanging down here and there.

With some light and sound we brought this whale to life.

Each piece of the projection onto the cells was cloned from the previous one with random changes, so each cell behaved differently, pulsating to the rhythm of the whale songs. To interact with the whale, the visitor could place a phone screen above the black box in the center of the room.
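The idea of cloning each cell from the previous one with random changes can be sketched as follows. This is only an illustration of the principle, not TUNDRA’s implementation; the parameter names (`phase`, `speed`, `brightness`), the mutation amount, and the seed are hypothetical.

```python
import random

def clone_with_mutation(cell, rng, amount=0.1):
    """Copy a cell's parameters, nudging each one by a small random offset."""
    return {key: value + rng.uniform(-amount, amount) for key, value in cell.items()}

def build_cells(count, seed=0):
    """Build a chain of cells, each cloned from the one before it."""
    rng = random.Random(seed)
    # Hypothetical starting parameters for the first cell.
    cells = [{"phase": 0.0, "speed": 1.0, "brightness": 0.5}]
    while len(cells) < count:
        cells.append(clone_with_mutation(cells[-1], rng))
    return cells

cells = build_cells(6)
```

Because every cell inherits from its neighbour, nearby cells stay similar while distant ones drift apart, which gives a varied but coherent overall pattern.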

Christoph De Boeck

Staalhemel

The intimate topography of the brain is laid out across a grid of 80 steel ceiling tiles as a spatialized form of tapping. The visitor can experience the dynamics of his cognitive self by fitting a wireless EEG interface on his head, which allows him to walk under the acoustic representation of his own brain waves. The accumulating resonances of impacted steel sheets generate penetrating overtones. The spatial distribution of impact and the overlapping of reverberations create a very physical soundspace to house an intangible stream of consciousness. ‘Staalhemel’ (‘steel sky’, 2009) articulates the contradictory relationship we entertain with our own nervous system. Neurological feedback means that cognitive focus is repeatedly interrupted by the representation of this focus. Concentrated thinking attempts to portray itself in a space that is reshaped by thinking itself nearly every split second.

Guy Ben-Ary

CellF

“CellF is the world’s first neural synthesiser. Its “brain” is made of biological neural networks that grow in a Petri dish and controls in real time its “body”, which is made of an array of analogue modular synthesizers that work in synergy with it and play with human musicians. It is a completely autonomous instrument that consists of a neural network, bio-engineered from my own cells, that controls a custom-built synthesizer. There is no programming or computers involved, only biological matter and analogue circuits; a ‘wet-analogue’ instrument.” Guy Ben-Ary

Maurice Benayoun and Tobias Klein

Brain Factory Prototype 2

Brain Factory is an installation that allows the audience to give a shape to human abstractions through Brain-Computer Interaction (BCI), and then to convert the resulting form into a physical object. The work examines human specificity through abstract constructs such as LOVE, FREEDOM, and DESIRE. The project articulates the relationship between thought and matter, concept and object, humans and machine. Brain Factory uses Electroencephalography (EEG) data captured by BCI. As each person’s brain activity is unique, we developed a novel calibration process for the individual data readings and associated emotional responses within a framework of binary outcomes. This is key to real-time feedback, a biofeedback, between the virtual generative processes and the brain’s associated response.

Marta Revuelta

AI Facial Profiling, Levels of Paranoia

Behnaz Farahi

Synapse

Synapse is a 3D-printed helmet which moves and illuminates according to brain activity[…] The main intention of this project is to explore the possibilities of multi-material 3D printing in order to produce a shape-changing structure around the body as a second skin. Additionally, the project seeks to explore direct control of the movement with neural commands from the brain so that we can effectively control the environment around us through our thoughts. The environment therefore becomes an extension of our bodies. This project aims to play with the intimacy of our bodies and the environment to the point that the distinction between them becomes blurred, as both have ‘become’ a single entity. The helmet motion is controlled by the Electroencephalography (EEG) of the brain. A NeuroSky EEG chip and a Mindflex headset have been modified and redesigned in order to create a seamless blend between technology and design.

christopher bauder

skalar

SKALAR is a large-scale art installation that explores the complex impact of light and sound on human perception. Light artist Christopher Bauder and musician Kangding Ray give an audio-visual narration of radiant light vector drawings and multi-dimensional sound inside the pitch-dark industrial space of Kraftwerk Berlin. By combining a vast array of kinetic mirrors, perfectly synchronized moving lights and a sophisticated multi-channel sound system, SKALAR reflects on the fundamental nature and essence of basic human emotions.

KATAGAI Hazuki

Accessories for Wearing Emotions

Head Accessory of Tears

I have heard somewhere that it is not yet fully understood why people shed tears.

We shed tears when feeling sad, moved, sometimes happy.

I do even when feeling angry.

We cannot control our emotions.

They sometimes cannot be stopped from overflowing to the outside even before we internally register them although they should exist within and surely derive from the inside of us.

It is as if they do not even pass through our brain – As if it is an involuntary action,

like in sports, where the body moves before we tell it to.

However, what would happen when we trace the process in reverse?

I tried to make it work from the outside in.

(Like, we sometimes pretend to be okay with an unwilling smile.)

I want to see what would happen on the inside of us if we “wear” emotions on the outside.

Hazuki Katagai

Greg Dunn and Brian Edwards

Self-Reflected

Dr. Greg Dunn (artist and neuroscientist) and Dr. Brian Edwards (artist and applied physicist) created Self Reflected to elucidate the nature of human consciousness, bridging the connection between the mysterious three pound macroscopic brain and the microscopic behavior of neurons. Self Reflected offers an unprecedented insight of the brain into itself, revealing through a technique called reflective microetching the enormous scope of beautiful and delicately balanced neural choreographies designed to reflect what is occurring in our own minds as we observe this work of art. Self Reflected was created to remind us that the most marvelous machine in the known universe is at the core of our being and is the root of our shared humanity.

Laura Jade

B R A I N L I G H T

“The catalyst for this research project was my flourishing intrigue and desire to harness my own brain as the creator of an interactive art experience where no physical touch was required except the power of my own thoughts. To experience a unique visualisation of brain activity and to share it with others I have created a large freestanding brain sculpture that is made of laser cut Perspex hand etched with neural networks that glow when light is passed through them.” Laura Jade

bernd hopfengaertner

BELIEF SYSTEMS

Facial micro expressions last less than a second and are almost impossible to control. They are hard wired to the emotional activity in the brain, which can be easily captured using specially developed technological devices. Free will is now in question as the science exposes decision-making as an emotional process rather than a rational one. This ability to read emotions technologically results in a society obsessed with its emotional reactions. Emotions, convictions and beliefs, which usually remain hidden, now become a public matter. “Belief systems” is a video scenario about a society that responds to the challenges of modern neuroscience by embracing these technological possibilities to read, evaluate and alter people’s behaviours and emotions.

Greg Dunn

brain art

To capture their strikingly chaotic and spontaneous forms, the neurons in Self Reflected are painted using a technique wherein ink is blown around on a canvas using jets of air. The resulting ink splatters naturally form fractal-like neural patterns, and although the artist learns to control the general boundaries of the technique, it remains at its heart a chaotic, abstract expressionist process.

GUY BEN-ARY, PHILIP GAMBLEN AND STEVE POTTER

Silent Barrage

Silent Barrage has a “biological brain” that telematically connects with its “body” in a way that is familiar to humans: the brain processes sense data that it receives, and then brain and body formulate expressions through movement and mark making. But this familiarity is hidden within a sophisticated conceptual and scientific framework that is gradually decoded by the viewer. The brain consists of a neural network of embryonic rat neurons, growing in a Petri dish in a lab in Atlanta, Georgia, which exhibits the uncontrolled activity of nerve tissue that is typical of cultured nerve cells. This neural network is connected to neural interfacing electrodes that write to and read from the neurons. The thirty-six robotic pole-shaped objects of the body, meanwhile, live in whatever exhibition space is their temporary home. They have sensors that detect the presence of viewers who come in. It is from this environment that data is transmitted over the Internet, to be read by the electrodes and thus to stimulate, train or calm parts of the brain, depending on which area of the neuronal net has been addressed.

David Szauder

Failed memories

‘Our brains store away images to retrieve them later, like files stored away on a hard drive. But when we go back and try to re-access those memories, we may find them to be corrupted in some way.’

Danny Hillis

parallel supercomputer

Connection Machine CM-1 (1986) and CM-2 (1987)

The Connection Machine was the first commercial computer designed expressly to work on “artificial intelligence” problems simulating intelligence and life. A massively parallel supercomputer with 65,536 processors, it was the brainchild of Danny Hillis, conceived while he was a doctoral student studying with Marvin Minsky at the MIT Artificial Intelligence Lab. In 1983 Danny founded Thinking Machines Corporation to build the machine, and hired me to lead the packaging design group. Working with industrial design consultants Allen Hawthorne and Gordon Bruce, and mechanical engineer consultant Ted Bilodeau, our goal was to make the machine look like no other machine ever built. I have described that journey in this article, published in 1994 in the DesignIssues journal and republished in 2010 in the book The Designed World.

Santiago Ramón y Cajal

purkinje neuron from the human cerebellum

Ramón y Cajal’s theory described how information flowed through the brain. Neurons were individual units that talked to one another directionally, sending information from long appendages called axons to branchlike dendrites, over the gaps between them.

He couldn’t see these gaps in his microscope, but he called them synapses, and said that if we think, learn and form memories in the brain then that itty-bitty space was most likely the location where we do it. This challenged the belief at the time that information diffused in all directions over a meshwork of neurons.

isabel nolan

Turning Point

Isabel Nolan’s artwork utilizes textiles, steel rods, and primary colored paint to approach questions of anxiety, current events, and the human condition. Her work has a particularly erudite quality, with materials teased and propped to mimic symbolism and images in literature, historical texts, science, and art. Nolan’s work has been exhibited throughout her native Ireland and wider Europe, including at the Irish Museum of Modern Art and the Musée d’art moderne de Saint-Étienne. With her first solo exhibition in the United States fast approaching, artnet News caught up with the scholarly artist to hear about her early diagrams of brains and ideas she is currently entertaining for her next body of work.

XAVIER DELORY

泽维尔底洛瑞

Ксавье Делори

Formes Urbaines, or Urban Forms, is the brainchild of photographer Xavier Delory, who states that the purpose of this ongoing project is “to study the recurrent characteristics of modern cities.” At first glance, the viewer considers whether the buildings in his images are real, though a prolonged study assures us that indeed, this is a commentary on the evolution of modern architecture. The wafer-thin office building, the façades that lack their buildings behind them, an apartment house that screams whimsy with its inverse construction, the biggest flat on top—all of these images were created from actual photographs and then digitally manipulated to achieve the desired effect.

Tavares Strachan

塔瓦雷斯斯特拉坎

ТАВАРЕС СТРАЧАН

Invisible Astronaut

“This neon and glass sculpture represents the cardiovascular system of astronaut Sally Ride shortly after her return to earth. The human body adapts to periods of prolonged weightlessness. When gravity suddenly returns, the blood pools in the lower extremities, and blood pressure drops. This condition is known as orthostatic intolerance. Hanging upside down can cause blood to pool around the heart, a potentially lethal condition. The awkward position shown above helps blood reach the brain without causing pooling around the heart.”

XAVIER DELORY

泽维尔底洛瑞

Ксавье Делори

Urban Forms

Formes Urbaines, or Urban Forms, is the brainchild of photographer Xavier Delory, who states that the purpose of this ongoing project is “to study the recurrent characteristics of modern cities.” At first glance, the viewer considers whether the buildings in his images are real, though a prolonged study assures us that indeed, this is a commentary on the evolution of modern architecture.

BOHYUN YOON

БОХЬЮН ЮН

윤보현

Transparent Business Suit

“There is much to support the view that it is clothes that wear us and not we them; they mold our hearts, our brains, our tongues to their liking.” -Virginia Woolf. Uniforms group people in simplified versions of our social strata and take away our identity and individuality. In my transparent suit, I wanted to break the rigid impositions of the formal suit. Therefore, I juxtaposed the suit of a businessman and the naked body.

BOUNCE STREETDANCE COMPANY

Insane in the brain

‘Insane in the Brain’ is a reworked production of One Flew Over the Cuckoo’s Nest as a feast of stunning street dance, injecting a healthy dose of contemporary styles fused with breathtaking hip hop moves. ‘Insane in the Brain’ features a pulsating soundtrack with cuts from Missy Elliott, Dizzee Rascal, Gotan Project, David Holmes and Cypress Hill. Inventive set design and choreography are mixed with film and multimedia sequences to produce a fast-paced show that is funny, moving and packed with high-octane dance.

LISA PARK

Лиза Парк

eunoia

“Eunoia” is a performance that uses my brainwaves, collected via an EEG sensor, to manipulate the motions of water. The title derives from the Greek “eu” (well) + “nous” (mind), meaning “beautiful thinking”. EEG is a brainwave-detecting sensor. It measures the frequencies of my brain activity (Alpha, Beta, Delta, Gamma, Theta), which relate to my state of consciousness, while I am wearing it. The data collected from the EEG is translated in real time to modulate vibrations of sound using software programs. The EEG sends the information about my brain activity to Processing, which is linked with Max/MSP to receive data and generate sound from Reaktor.
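As a rough illustration of how the named frequency bands might be told apart and turned into relative levels for sound, here is a minimal sketch. It is not the artist’s Processing or Max/MSP patch: the band edges are approximate, commonly cited values that vary between sources, and the function names and sample reading are hypothetical.

```python
# Approximate band edges in Hz (an assumption; exact boundaries vary between sources).
EEG_BANDS = [
    ("Delta", 0.5, 4.0),
    ("Theta", 4.0, 8.0),
    ("Alpha", 8.0, 13.0),
    ("Beta", 13.0, 30.0),
    ("Gamma", 30.0, 100.0),
]

def band_for(frequency_hz):
    """Return the name of the band containing the frequency, or None if outside all bands."""
    for name, low, high in EEG_BANDS:
        if low <= frequency_hz < high:
            return name
    return None

def normalize(band_powers):
    """Scale band powers so they sum to 1 (all zeros if there is no signal)."""
    total = sum(band_powers.values())
    if total == 0:
        return {name: 0.0 for name in band_powers}
    return {name: power / total for name, power in band_powers.items()}

# Hypothetical reading: relative power per band from one window of EEG samples.
reading = {"Delta": 2.0, "Theta": 1.0, "Alpha": 4.0, "Beta": 2.0, "Gamma": 1.0}
levels = normalize(reading)
loudest = max(levels, key=levels.get)
```

The normalized `levels` could then drive something like the volume of a speaker per band, so that the dominant band produces the strongest vibration in the water.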

Paolo Chiasera

Black Brain 3